Real-Time Material Flow Estimation

A custom neural network running on embedded hardware, counting discrete units with sub-1% error at the optimal flow rate and below 5% across the primary operating range.

Count error at optimal flow rate: below 1%

Theoretical RMSE after temporal smoothing: ~3.2 units/s

Edge inference - no cloud dependency

When a machine dispenses thousands of small items per minute, knowing exactly how many have passed through is critical - for dosing accuracy, quality control, and process traceability. The problem is that at realistic operating speeds, items overlap and cluster, making a simple direct count unreliable. A smarter approach is needed.

We built a system that continuously analyses the machine's sensor signals and estimates flow rate in real time, accumulating an accurate total count without ever interrupting the process. It runs directly on the machine's embedded hardware, with no cloud connection and no network latency, and keeps count error below 5% across the primary operating range (~200–350 units/s).

The Challenge

Due to a non-disclosure agreement, we are unable to share further details about the specific application or customer.

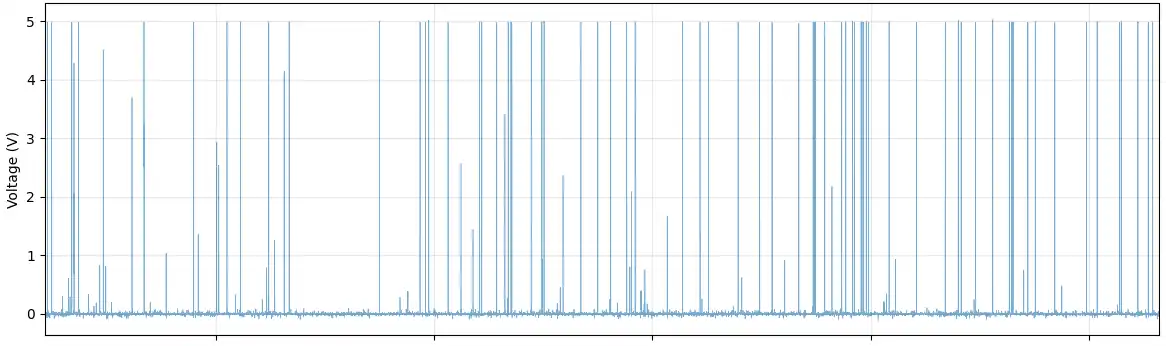

The hardware includes multiple analog sensors along a dispensing channel. As discrete units pass by, each sensor produces a continuous signal - but at higher flow rates, units overlap and interfere with one another, making direct pulse-counting strategies unreliable.

The core problem: infer instantaneous flow rate (units per second) from multi-channel signals, then integrate that estimate over time into a total count. The system must run in real time on an embedded device with no cloud connectivity.

Why Standard Regression Fails

Data exploration revealed a critical non-linearity: at low flow rates, units pass individually (discrete regime) and signals scale predictably. At higher flow rates, units overlap and saturate the sensors (chaotic regime). Because of this physical phase transition, a single linear regression model cannot fit both regimes.

Dimensionality reduction pointed to the same non-linear structure: PCA captured only ~30% of variance, while t-SNE revealed two clearly separated clusters corresponding to the two physical regimes. A non-linear model was needed.

Our Approach

Data Pipeline

Raw multi-channel signals were acquired at ~20 kHz. After downsampling and efficient format conversion, we achieved a 93% reduction in data volume (40 GB → 2.9 GB) with no loss of task-relevant information. A sliding window strategy (250 ms windows, 50 ms hop, 80% overlap) generates a smooth, real-time flow rate estimate suitable for edge deployment.
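The windowing scheme can be sketched in a few lines. This is a minimal illustration, not the production pipeline: the function name `window_bounds` and the 2 kHz post-downsampling rate are assumptions made for the example, since the downsampled rate is not stated here.

```python
def window_bounds(n_samples, sample_rate_hz, window_s=0.250, hop_s=0.050):
    """Yield (start, stop) sample indices for every full sliding window."""
    window = round(window_s * sample_rate_hz)
    hop = round(hop_s * sample_rate_hz)
    if window <= 0 or hop <= 0:
        raise ValueError("window and hop must span at least one sample")
    for start in range(0, n_samples - window + 1, hop):
        yield start, start + window


# One second of signal at an ASSUMED post-downsampling rate of 2 kHz.
bounds = list(window_bounds(n_samples=2000, sample_rate_hz=2000))

# Fraction of each window shared with its successor: 1 - hop / window.
overlap = 1 - 0.050 / 0.250
```

With a 50 ms hop, a new estimate is available 20 times per second, and consecutive windows share 80% of their samples.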

Feature Engineering

We extract features from three complementary domains:

Time-Domain

- Normalized pulse count and duty cycle

- Pulse duration and inter-pulse interval statistics

- Signal energy: RMS and total integrated area
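As a rough illustration of the time-domain features listed above, the sketch below computes a few of them for one window of a single channel. The threshold-crossing pulse detector, the normalisation of pulse count to pulses per second, and the rectangle-rule area are assumptions for the example, not details taken from the deployed system.

```python
import math


def time_domain_features(samples, sample_rate_hz, threshold):
    """Compute simple pulse and energy features for one single-channel window."""
    n = len(samples)
    above = [s > threshold for s in samples]
    # A pulse starts wherever the signal rises above the threshold.
    pulse_count = sum(
        1 for i, a in enumerate(above) if a and (i == 0 or not above[i - 1])
    )
    duration_s = n / sample_rate_hz
    return {
        "pulses_per_second": pulse_count / duration_s,  # assumed normalisation
        "duty_cycle": sum(above) / n,                   # fraction of time above threshold
        "rms": math.sqrt(sum(s * s for s in samples) / n),
        # Rectangle-rule integral of |signal| over the window.
        "integrated_area": sum(abs(s) for s in samples) / sample_rate_hz,
    }


# Toy window: two pulses in one second, sampled at 10 Hz.
window = [0, 0, 1, 1, 0, 0, 1, 1, 1, 0]
features = time_domain_features(window, sample_rate_hz=10, threshold=0.5)
```

Note that the pulse count stops growing once pulses merge, whereas RMS and integrated area keep increasing as more material passes the sensor.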

Frequency-Domain

- Spectral centroid and bandwidth

- Power in defined frequency bands

- Mel-frequency cepstral coefficients (MFCCs)
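The first two frequency-domain features can be sketched as follows. To stay dependency-free the example uses a naive O(n²) DFT; a real pipeline would presumably use an FFT routine. All function names are illustrative, and MFCCs are left out for brevity.

```python
import cmath
import math


def power_spectrum(samples, sample_rate_hz):
    """Return (frequencies, power) for the non-negative DFT bins (naive DFT)."""
    n = len(samples)
    freqs, power = [], []
    for k in range(n // 2 + 1):
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(samples)
        )
        freqs.append(k * sample_rate_hz / n)
        power.append(abs(coeff) ** 2)
    return freqs, power


def spectral_centroid(freqs, power):
    """Power-weighted mean frequency of the spectrum."""
    total = sum(power)
    return sum(f * p for f, p in zip(freqs, power)) / total if total > 0 else 0.0


def band_power(freqs, power, low_hz, high_hz):
    """Total power in the half-open band [low_hz, high_hz)."""
    return sum(p for f, p in zip(freqs, power) if low_hz <= f < high_hz)


# Toy input: a pure 50 Hz tone, 64 samples at 640 Hz (10 Hz bin spacing).
tone = [math.sin(2 * math.pi * 50 * t / 640) for t in range(64)]
freqs, power = power_spectrum(tone, sample_rate_hz=640)
centroid = spectral_centroid(freqs, power)
share_40_60 = band_power(freqs, power, 40, 60) / sum(power)
```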

Cross-Channel

- Maximum cross-correlation between channel pairs

- Lag at peak correlation and spectral coherence

- Simultaneity count across channels
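For the cross-channel features, a minimal sketch of the peak cross-correlation and its lag between two channels might look like this. The function name, the mean-removal step, and the normalisation are assumptions chosen for the example; spectral coherence and the simultaneity count are not shown.

```python
import math


def peak_cross_correlation(a, b, max_lag):
    """Return (peak normalised correlation, lag in samples) for two equal-length channels.

    A positive lag means channel b trails channel a.
    """
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    da = [x - mean_a for x in a]
    db = [x - mean_b for x in b]
    norm = math.sqrt(sum(x * x for x in da) * sum(x * x for x in db))
    if norm == 0:
        return 0.0, 0  # at least one channel is constant
    best_corr, best_lag = -math.inf, 0
    for lag in range(-max_lag, max_lag + 1):
        # Pair a[i] with b[i + lag] wherever both indices are valid.
        lo, hi = max(0, -lag), min(n, n - lag)
        corr = sum(da[i] * db[i + lag] for i in range(lo, hi)) / norm
        if corr > best_corr:
            best_corr, best_lag = corr, lag
    return best_corr, best_lag


# Toy input: the same pulse seen three samples later on the downstream sensor.
upstream = [0, 0, 1, 3, 1, 0, 0, 0, 0, 0, 0, 0]
downstream = [0, 0, 0, 0, 0, 1, 3, 1, 0, 0, 0, 0]
corr, lag = peak_cross_correlation(upstream, downstream, max_lag=5)
```

For sensors spaced along a channel, the lag at peak correlation relates to the transit time of units between the two sensors.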

Permutation importance analysis confirmed that energy-based features - Total Integrated Area and RMS - are the most predictive of flow rate across both operating regimes.
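Permutation importance measures how much a model's error grows when one feature column is shuffled. The sketch below is a dependency-free illustration of the idea; `toy_model` and the toy data are hypothetical stand-ins for the trained network and its feature matrix.

```python
import random


def rmse(y_true, y_pred):
    """Root-mean-square error between two equal-length sequences."""
    return (sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)) ** 0.5


def permutation_importance(predict, rows, targets, n_repeats=5, seed=0):
    """Return the mean RMSE increase caused by shuffling each feature column."""
    rng = random.Random(seed)
    baseline = rmse(targets, [predict(r) for r in rows])
    importances = []
    for col in range(len(rows[0])):
        increases = []
        for _ in range(n_repeats):
            shuffled = [r[col] for r in rows]
            rng.shuffle(shuffled)
            # Rebuild each row with only this one column replaced.
            permuted = [r[:col] + [v] + r[col + 1:] for r, v in zip(rows, shuffled)]
            increases.append(rmse(targets, [predict(r) for r in permuted]) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances


def toy_model(row):
    """Hypothetical stand-in for the trained model: uses feature 0 only."""
    return 100.0 * row[0]


rows = [[i / 10, (i * 7) % 10] for i in range(20)]
targets = [toy_model(r) for r in rows]
scores = permutation_importance(toy_model, rows, targets)
```

A feature the model ignores scores zero, while shuffling a feature the model relies on raises the error.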

Model Architecture

Given the multi-regime physics, a fully connected Artificial Neural Network (ANN) was chosen over classical regression. Architecture and hyperparameters were optimised using KerasTuner with the Hyperband strategy, an efficient search method that explores a wide design space while stopping under-performing configurations early. The final model converged smoothly without overfitting.
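A Hyperband search of this kind might be set up roughly as below. This is a sketch under assumptions, not the configuration actually used: the feature count, the search ranges, the early-stopping patience, and the directory and project names are all hypothetical. TensorFlow and KerasTuner are imported inside the functions so that the module still loads on machines where they are not installed.

```python
N_FEATURES = 32  # hypothetical feature-vector length; not stated in this article


def build_model(hp):
    """Build one candidate network from a KerasTuner HyperParameters object."""
    from tensorflow import keras  # lazy import: heavy optional dependency

    model = keras.Sequential()
    model.add(keras.Input(shape=(N_FEATURES,)))
    # Search over depth and per-layer width (ranges are illustrative).
    for i in range(hp.Int("num_layers", min_value=1, max_value=4)):
        model.add(keras.layers.Dense(
            units=hp.Int(f"units_{i}", min_value=16, max_value=128, step=16),
            activation="relu",
        ))
    model.add(keras.layers.Dense(1))  # single output: flow rate in units/s
    learning_rate = hp.Float(
        "learning_rate", min_value=1e-4, max_value=1e-2, sampling="log"
    )
    model.compile(optimizer=keras.optimizers.Adam(learning_rate), loss="mse")
    return model


def run_search(x_train, y_train, x_val, y_val):
    """Run a Hyperband search and return the best model found."""
    import keras_tuner as kt
    from tensorflow import keras

    tuner = kt.Hyperband(
        build_model,
        objective="val_loss",
        max_epochs=50,
        factor=3,
        directory="tuning",        # hypothetical output location
        project_name="flow_rate",  # hypothetical project name
    )
    tuner.search(
        x_train, y_train,
        validation_data=(x_val, y_val),
        callbacks=[keras.callbacks.EarlyStopping(monitor="val_loss", patience=5)],
    )
    return tuner.get_best_models(num_models=1)[0]
```

Hyperband trains many configurations for a few epochs and promotes only the best fraction (set by `factor`) to longer training runs, which keeps a wide search affordable.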

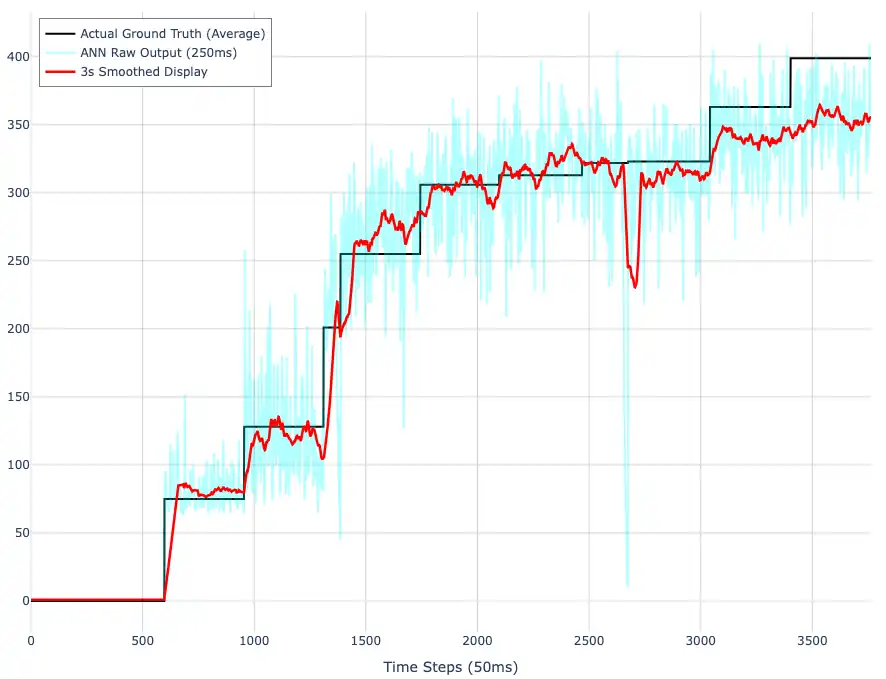

From Rate to Count

Instantaneous flow rate estimates are integrated using a Riemann sum, with careful handling of the hop size to avoid double-counting from overlapping windows. A 2-second moving-average filter reduces theoretical RMSE from ~20 units/s (raw ANN output) to ~3.2 units/s, roughly a 6× improvement.
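The integration and smoothing steps can be sketched as follows. The function names are illustrative, and the causal (trailing) form of the moving average is an assumption; the key point is that each estimate is weighted by the 50 ms hop rather than by the 250 ms window length, so overlapping windows are not counted five times.

```python
from collections import deque


def smooth(rates, n_taps):
    """Causal moving average over the most recent n_taps rate estimates."""
    recent = deque(maxlen=n_taps)
    smoothed = []
    for r in rates:
        recent.append(r)
        smoothed.append(sum(recent) / len(recent))
    return smoothed


def integrate_count(rates, hop_s):
    """Riemann sum: each estimate contributes rate * hop, not rate * window length."""
    return sum(r * hop_s for r in rates)


HOP_S = 0.050

# Ten seconds of constant 200 units/s estimates, one estimate per hop.
rates = [200.0] * 200
total = integrate_count(rates, HOP_S)

# A 2-second moving average spans this many consecutive estimates.
n_taps = round(2.0 / HOP_S)
```

One reading that is consistent with the quoted figures: a 2 s average at a 50 ms hop spans 40 estimates, and if their errors were independent the RMSE would shrink by √40 ≈ 6.3, taking ~20 units/s to ~3.2 units/s. Because consecutive windows overlap by 80%, their errors are correlated in practice, so this is best treated as an optimistic bound.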

Results

The model was validated across the full operating range of the machine:

| Flow Rate | Count Error |
|---|---|
| Low (~80 units/s) | +10.2% |
| Medium (~200 units/s) | +4.6% |
| High (~300 units/s) | −0.8% |
| Very high (~400 units/s) | −12.1% |

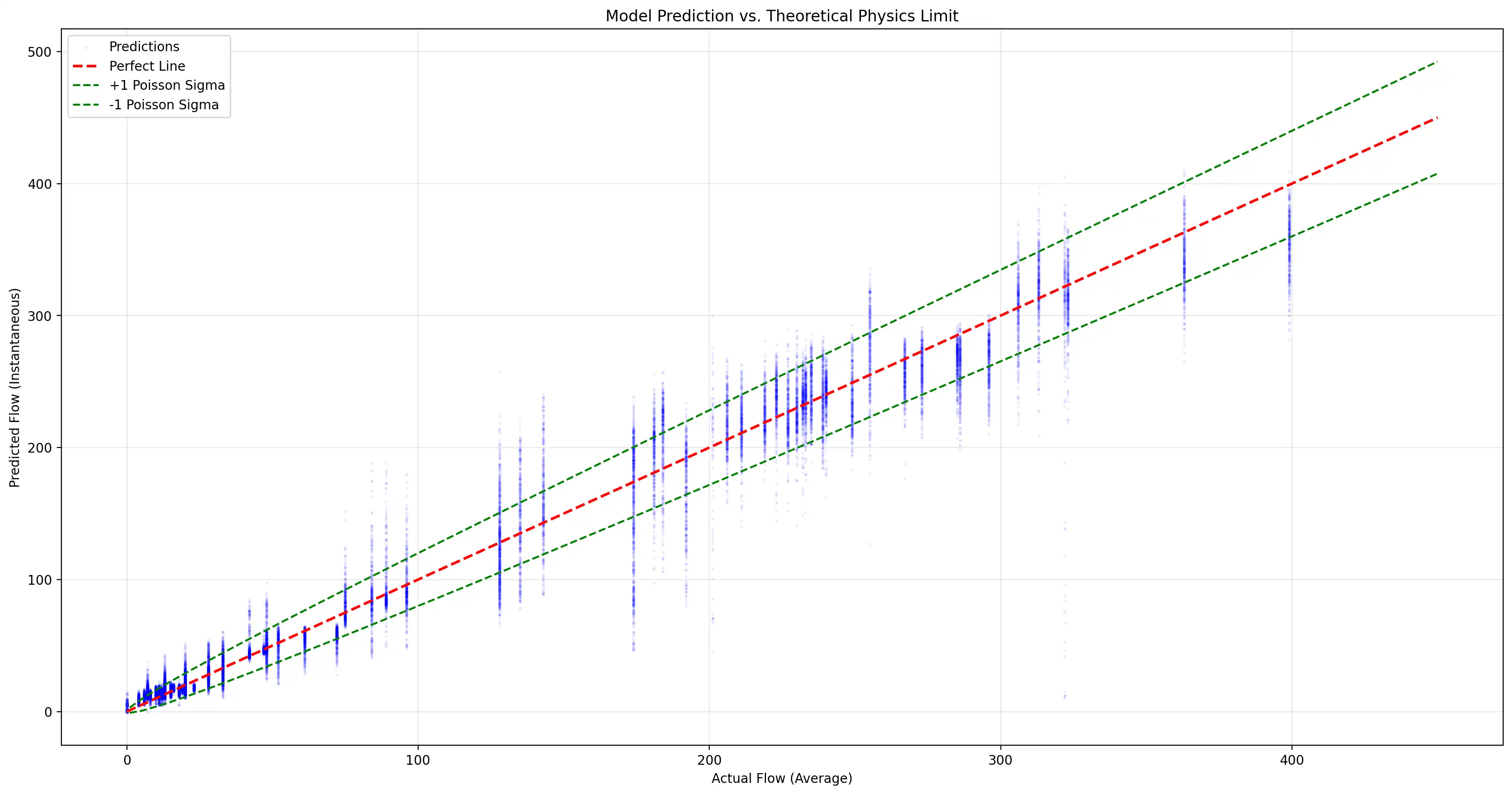

The model performs optimally in the medium-to-high range (~200–350 units/s), which is the primary operating range of the machine. At very high flow rates, systematic underestimation occurs due to signal saturation - a fundamental physical constraint.

At the optimal operating point, the system reaches errors below 1% - compared to approximately 5% for best-in-class alternatives.

The instantaneous RMSE of ~20 units/s is comparable to the Poisson noise floor of ~28 units/s - the irreducible variance inherent in any random discrete flow process. This suggests the model is extracting nearly all available information from the signal.
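The ~28 units/s figure can be reproduced with a short calculation, offered here as one plausible reading rather than the stated derivation. If units arrive as a Poisson process with rate $\lambda$ and are observed over a window of length $T$, the count $N$ in the window has variance $\lambda T$, so the rate estimate $N/T$ has standard deviation $\sqrt{\lambda / T}$. Assuming $\lambda = 200$ units/s (the medium operating point) and $T = 0.25$ s (one analysis window):

$$
\sigma_{\text{rate}} = \frac{\sqrt{\lambda T}}{T} = \sqrt{\frac{\lambda}{T}} = \sqrt{\frac{200}{0.25}} = \sqrt{800} \approx 28.3 \ \text{units/s}
$$

Under this reading the floor depends on the operating point: it grows with the square root of the flow rate and shrinks as the window gets longer.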

Key Takeaways

Edge Deployment

The trained model runs entirely on embedded hardware in real time, with no cloud dependency. This enables use in field conditions and removes any latency from remote inference.

Physics-Informed Design

Understanding the physical phase transition between flow regimes directly informed our model choice and feature engineering strategy - avoiding the pitfalls of fitting a linear model to a fundamentally non-linear system.

Near-Optimal Accuracy

The system operates close to the fundamental Poisson noise floor of the physical process, which suggests that the feature set and architecture are well matched to the problem.

Generalizable Methodology

The combination of multi-channel feature extraction, ANN regression, and temporal integration carries over to other discrete-unit flow estimation problems: granules, pellets, components, tablets - across industrial and agricultural contexts.

Got a similar challenge?

We specialise in edge machine learning for demanding industrial applications. Get in touch to discuss your project.
